Machine learning is everywhere around us these days, and it’s no surprise that everyone wants to get in on the action. It’s an exciting field with a lot of potential, but it can be difficult to make sense of all the jargon.

To help you out, we’ve compiled a comprehensive glossary of machine learning terms and definitions!

Accuracy

Accuracy is a metric used to assess the performance of a classification model. It represents the proportion of correct predictions made by the model, expressed as a percentage.
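
As a rough illustration, accuracy can be computed by comparing predictions with the true labels. The function name `accuracy` and the sample labels below are made up for this sketch.

```python
def accuracy(y_true, y_pred):
    """Return the fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)

# Hypothetical labels: 4 of the 5 predictions are correct.
y_true = ["cat", "dog", "cat", "cat", "dog"]
y_pred = ["cat", "dog", "dog", "cat", "dog"]
print(f"{accuracy(y_true, y_pred):.0%}")  # 80%
```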

Algorithm

An algorithm in machine learning is a set of instructions or a method used to create a model. It applies a specific procedure, such as linear regression or decision trees, to the data to generate the desired machine learning model.

Annotation

Annotation in machine learning involves attaching additional information to the data. Specifically, it is the process of assigning predefined categories or labels to documents and images. This labeled data is then used for training machine learning models, particularly for classification tasks through supervised learning. An example is assigning the digit “8” to an image of a handwritten numeral in a recognition task.

Artificial Neural Network

Artificial Neural Networks (ANNs) are machine learning algorithms inspired by the structure and function of biological neural networks found in animal brains. They mimic the way the human brain processes and analyzes data. ANNs consist of interconnected neurons arranged in layers, performing complex computations to solve problems, much like how humans would approach them.

Attribute

An attribute is a characteristic that describes an observation or instance. In a structured data format like a table, attributes are represented by columns, such as color, size, or weight. For instance, when estimating atmospheric temperature, attributes like atmospheric pressure and wind speed are recorded to determine today’s temperature.

Bias

Bias in machine learning is a systematic error that causes a model’s predictions to deviate consistently from the true values, leading to inaccurate outcomes. It can arise from overly simple assumptions in the model, or when certain data elements are given higher importance or representation in the training data, resulting in systematic prejudice and analytical errors.

Classification

Classification in machine learning is a predictive modeling technique that categorizes data inputs by assigning labels or categories. It utilizes algorithms like logistic regression, Naive Bayes, k-nearest neighbors, and support vector machines to separate inputs into distinct classes. This supervised learning approach enables data classification into binary or multi-class categories based on labeled examples.

Classification Threshold

The classification threshold is the cutoff applied to a model’s predicted score to turn it into a class decision. For instance, suppose a model predicts the presence of a cat in an image with a certain confidence, and the threshold is set at 60%. If the confidence exceeds 60%, the image is classified as containing a cat; otherwise, it is not. In this case, the classification threshold is 60% (0.6).
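
A minimal sketch of applying the 60% threshold from the cat example. The function name `classify` and the confidence scores are hypothetical.

```python
def classify(confidence, threshold=0.6):
    """Label an image "cat" only if the confidence exceeds the threshold."""
    return "cat" if confidence > threshold else "not cat"

# Hypothetical model confidences for four images.
scores = [0.95, 0.61, 0.60, 0.30]
labels = [classify(s) for s in scores]
print(labels)  # ['cat', 'cat', 'not cat', 'not cat']
```

Raising the threshold makes the model more cautious about predicting “cat”, while lowering it makes positive predictions more frequent.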

Clustering

Clustering is an unsupervised learning technique in machine learning that groups unlabeled data based on inherent characteristics. It aims to identify clusters of data points with similar traits while keeping different groups distinct. By maximizing intra-cluster similarity and minimizing inter-cluster similarity, clustering algorithms such as K-Means, Hierarchical Clustering, and Affinity Propagation help uncover patterns and structure within the data.
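
The K-Means idea can be sketched on one-dimensional data. This is only an illustration: the function name `kmeans_1d`, the fixed starting centroids, and the sample points are assumptions, and real implementations typically handle initialization and higher dimensions more carefully.

```python
def kmeans_1d(points, centroids, n_iters=10):
    """Tiny 1-D K-Means: alternate between assignment and centroid update."""
    for _ in range(n_iters):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster
        # (an empty cluster keeps its previous centroid).
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters

points = [1.0, 1.5, 2.0, 9.0, 9.5, 10.0]   # hypothetical unlabeled data
centroids, clusters = kmeans_1d(points, centroids=[0.0, 5.0])
print(centroids)  # [1.5, 9.5]
print(clusters)   # [[1.0, 1.5, 2.0], [9.0, 9.5, 10.0]]
```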

Continuous variable

Continuous variables are types of variables that can take on a range of values defined by a numerical scale. They include measurements like sales figures or lifespan, which can span a continuum rather than being limited to specific discrete values.

Convergence

Convergence is the stage in training a machine learning model where the loss changes very little between successive iterations. It signifies that the model has reached a stable state, typically at or near a minimum of the loss function. When the change in loss becomes negligible, the model is said to have converged, and further training is unlikely to improve it.
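
One way to picture this is a training loop that stops once the loss barely changes. The sketch below minimizes a toy loss, (w - 3)², with gradient descent; the loss, the tolerance `tol`, and the function name are all assumptions chosen for illustration.

```python
def train_until_converged(lr=0.1, tol=1e-8, max_iters=10_000):
    """Minimize loss(w) = (w - 3)**2 and stop once the loss barely changes."""
    w = 0.0
    prev_loss = (w - 3) ** 2
    for step in range(1, max_iters + 1):
        grad = 2 * (w - 3)      # derivative of the loss with respect to w
        w -= lr * grad          # gradient descent update
        loss = (w - 3) ** 2
        if abs(prev_loss - loss) < tol:
            return w, step      # change in loss is negligible: converged
        prev_loss = loss
    return w, max_iters         # iteration budget exhausted

w, steps = train_until_converged()
print(round(w, 3), steps)
```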

Deep Learning

Deep Learning is a subfield of machine learning that mimics the functioning of the human brain. It utilizes artificial neural networks to interpret large amounts of structured and unstructured data, identifying patterns and making informed decisions. By learning from vast data sets, deep learning networks improve their accuracy and decision-making capabilities.

Deep learning models, such as multi-layer perceptrons and convolutional neural networks, have gained significant attention due to their success in various domains like computer vision, signal processing, medical diagnosis, and autonomous driving.

Dimension

In machine learning, the concept of dimension differs from its definition in physics. In this context, dimension refers to the number of features present in a dataset. For instance, in object detection, a 28x28 pixel image with 3 color channels (28x28x3) contains 2,352 values once flattened, so each image has 2,352 features. Essentially, dimensionality reflects the number of inputs or features used in algorithms to process and analyze data.
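
To make the 28x28x3 example concrete, the sketch below flattens a placeholder image into a single feature vector and counts its entries. The all-zero image is hypothetical.

```python
height, width, channels = 28, 28, 3

# A hypothetical all-black image stored as nested lists: height x width x channels.
image = [[[0] * channels for _ in range(width)] for _ in range(height)]

# Flattening turns the image into one long feature vector.
flat = [value for row in image for pixel in row for value in pixel]
print(len(flat))  # 2352
```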

Ensemble Learning

Ensemble Learning is an approach that combines the predictions of two or more models, for example by voting or averaging, so that the consensus prediction benefits from the distinctive strengths of each model.
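
One simple way to reach a prediction consensus is majority voting. In this sketch, the function name `majority_vote` and the three model outputs are hypothetical.

```python
from collections import Counter

def majority_vote(predictions):
    """Return the label predicted by the largest number of models."""
    return Counter(predictions).most_common(1)[0][0]

# Hypothetical predictions from three different models for one email.
model_predictions = ["spam", "not spam", "spam"]
print(majority_vote(model_predictions))  # spam
```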

Epoch

In machine learning, an epoch is one complete pass of the training algorithm over the entire dataset. When the data is processed in batches, one epoch consists of as many batch updates (often called iterations) as it takes to go through every sample once.
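
When training in batches, a single pass over the dataset is split into several weight updates. The arithmetic below shows how many updates make up one epoch; the dataset size, batch size, and epoch count are hypothetical.

```python
import math

n_samples = 1000   # hypothetical dataset size
batch_size = 32    # hypothetical batch size
n_epochs = 5

# The last batch of each epoch may be smaller, hence the ceiling.
updates_per_epoch = math.ceil(n_samples / batch_size)
total_updates = updates_per_epoch * n_epochs
print(updates_per_epoch, total_updates)  # 32 160
```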

Extrapolation

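Extrapolation involves making predictions outside the range of the data a model was built on. For instance, just because my dog barks doesn’t mean all dogs do too. In machine learning, extrapolating beyond the training data can be problematic because the model has no evidence about how the relationship behaves outside that range.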

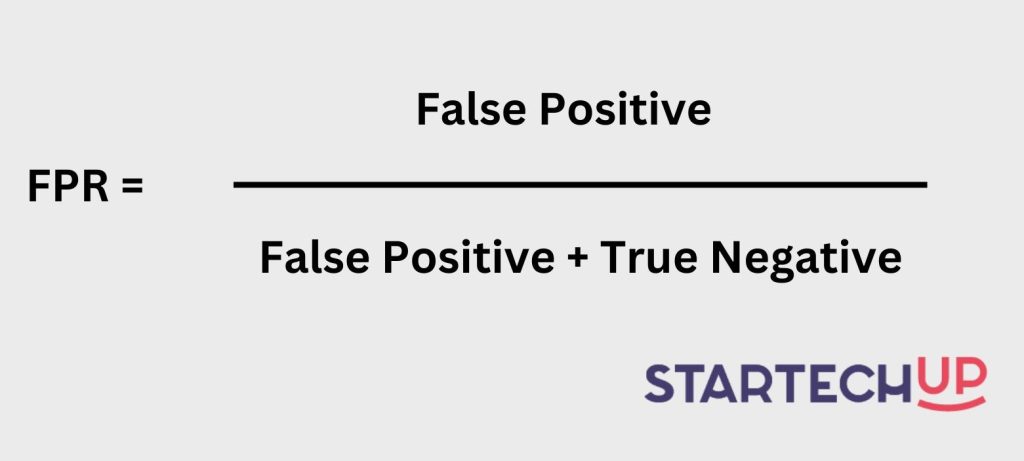

False Positive Rate (FPR)

In machine learning, the False Positive Rate (FPR) is a metric used to evaluate the performance of a classification model. It is computed by dividing the number of false positive predictions by the total number of actual negative instances.
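
The calculation can be sketched as follows, assuming labels are encoded as 1 for positive and 0 for negative. The function name and sample labels are made up for illustration.

```python
def false_positive_rate(y_true, y_pred):
    """FPR = false positives / actual negatives (1 = positive, 0 = negative)."""
    false_positives = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    actual_negatives = sum(1 for t in y_true if t == 0)
    if actual_negatives == 0:
        raise ValueError("FPR is undefined without actual negative instances")
    return false_positives / actual_negatives

# Hypothetical labels: 4 actual negatives, 1 of them wrongly predicted positive.
y_true = [0, 0, 0, 0, 1, 1]
y_pred = [1, 0, 0, 0, 1, 0]
print(false_positive_rate(y_true, y_pred))  # 0.25
```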

Feature

In machine learning, features are the attribute-value pairs used for training. For example, “temperature” is the attribute, and “temperature = 25° C” is the corresponding feature.

Feature selection

Feature selection is the process of choosing the most relevant features (input variables) to use when building a machine learning model.

Gradient Accumulation

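Gradient accumulation is a mechanism that splits a large training batch into smaller mini-batches that run sequentially. The gradients from each mini-batch are accumulated, and the model weights are updated only once all mini-batches have been processed. This makes it possible to train with an effective batch size that would otherwise require more GPU memory than is available.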
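
The sketch below illustrates why this works, using a toy one-weight model y = w·x with a squared-error loss (both are assumptions for illustration). Summing the gradients of the mini-batches one after another gives the same result as computing the gradient over the full batch at once.

```python
def grad_sum(w, batch):
    """Sum of per-sample gradients of (w*x - y)**2 with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in batch)

data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]  # hypothetical (x, y) pairs
w = 0.5

# Full-batch gradient: needs every sample in memory at once.
full_grad = grad_sum(w, data) / len(data)

# Accumulated gradient: process two samples at a time, average only at the end.
accumulated = 0.0
for start in range(0, len(data), 2):
    mini_batch = data[start:start + 2]
    accumulated += grad_sum(w, mini_batch)
accumulated_grad = accumulated / len(data)

print(full_grad, accumulated_grad)  # -22.5 -22.5
```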

Hidden Layers

Hidden layers in neural networks refer to the layers that exist between the input and output layers.

Hyperparameters

Hyperparameters are settings of a model or its training procedure that are chosen before training rather than learned from the data. They govern the model’s behavior and performance, determining factors such as the speed of learning (learning rate) or the complexity of the model. Examples of hyperparameters include the depth of trees in a Decision Tree or the number of hidden layers in a Neural Network.

Instance

An instance is a single data point or sample within a dataset. In tabular data, it corresponds to one row of feature values. The term is synonymous with “observation”.

Label

In supervised learning, the label corresponds to the “answer” or the target value associated with an observation. For instance, in a dataset used for classifying flowers into different species based on features like petal length and width, the label would indicate the species of the flower.

Learning rate

The learning rate is a hyperparameter that controls the size of the steps taken by an optimization algorithm such as Gradient Descent. A higher rate covers more ground with each step but risks overshooting the lowest point. A lower rate takes more careful steps in the direction of the negative gradient, but it needs more steps, and therefore more computation time, to reach the minimum.
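
The trade-off can be seen on a toy problem. The sketch below runs gradient descent on f(w) = w², whose minimum is at w = 0, with three hypothetical learning rates; the function and the chosen rates are assumptions for illustration.

```python
def gradient_descent(lr, steps=20, w=1.0):
    """Run gradient descent on f(w) = w**2, whose minimum is at w = 0."""
    for _ in range(steps):
        w -= lr * 2 * w   # the gradient of w**2 is 2*w
    return w

for lr in (0.01, 0.1, 1.1):
    print(lr, gradient_descent(lr))
```

After the same number of steps, the smallest rate is still far from the minimum, the moderate rate is close to it, and the largest rate overshoots on every step and moves further away.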

Loss

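Loss is a measure of the discrepancy between the true value and the predicted value in machine learning. It is calculated by a loss function, such as mean squared error for regression or cross-entropy for classification, rather than by a simple subtraction, so that positive and negative errors do not cancel out. A lower loss on the training data generally indicates a better fit, but it does not guarantee a better model, because the model may have overfitted to the training data.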
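
Mean squared error is one common loss function; it is used here as an assumption for illustration, and the sample values are hypothetical.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between true and predicted values."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

y_true = [3.0, 5.0, 7.0]
y_pred = [2.0, 5.0, 9.0]
# Squared errors are 1, 0, and 4, so the loss is 5 / 3.
print(mean_squared_error(y_true, y_pred))
```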

Model

A model is a data structure that holds the learned information from a machine learning algorithm applied to a dataset. It serves as the output of the algorithm, capturing the acquired knowledge.

Natural Language Processing (NLP)

Natural Language Processing (NLP) is a subfield of artificial intelligence that focuses on processing human languages. It plays a crucial role in data science and machine learning. Much as people do in conversation, NLP algorithms analyze the syntax (word arrangement) and semantics (meaning of the arrangement) to understand and interpret language accurately.

NLP has diverse applications, such as chatbot services, speech recognition, machine translation, and everyday tasks like search engines and autocorrection features.

Neural Networks

Neural networks are mathematical algorithms that mimic the structure and functioning of the brain. They consist of sequential layers of interconnected neurons, which enable them to analyze and understand complex patterns in data.

Normalization

Normalization rescales feature values to a standard range, such as 0 to 1. It is commonly used so that features measured on different scales contribute comparably to the model, which often makes training faster and more stable and can improve performance.
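
Min-max scaling is one common way to rescale a feature to the range 0 to 1. It is shown here as an illustrative choice, with hypothetical values and a made-up function name.

```python
def min_max_normalize(values):
    """Rescale values linearly so the smallest becomes 0 and the largest 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]   # all values identical: nothing to scale
    return [(v - lo) / (hi - lo) for v in values]

weights_kg = [50, 60, 75, 100]   # hypothetical feature values
print(min_max_normalize(weights_kg))  # [0.0, 0.2, 0.5, 1.0]
```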

Noise

Noise is irrelevant or random information in a dataset that obscures underlying patterns.

Overfitting

Overfitting is when a model is too specialized in learning from the training data, resulting in the incorporation of noise and specific details of that dataset. This leads to poor performance on new, unseen data and undermines the model’s effectiveness.

Parameters

Parameters are properties or variables that are learned during the training process of a machine learning model. They are specific to each experiment and are adjusted using optimization algorithms.

Precision

Precision is a performance metric used in binary classification to evaluate how accurately a model identifies positive observations (e.g., “Yes”). In simpler terms, precision answers the question: “When the model predicts a positive outcome, how often is it correct?”

Recall

Recall, also called sensitivity, is a binary classification metric that measures the classifier’s ability to detect positive instances. It answers the question: “How many of the actual positive instances did the classifier correctly identify?”
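
Recall and the precision metric defined above can both be computed from the counts of true positives, false positives, and false negatives. The sketch assumes labels encoded as 1 (positive) and 0 (negative); returning 0.0 when a metric is undefined is a convention chosen for this example.

```python
def precision_recall(y_true, y_pred):
    """Return (precision, recall) for binary labels, where 1 is the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0   # correct among predicted positives
    recall = tp / (tp + fn) if tp + fn else 0.0      # found among actual positives
    return precision, recall

# Hypothetical labels: 3 true positives, 1 false positive, 2 false negatives.
y_true = [1, 1, 1, 1, 1, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0]
print(precision_recall(y_true, y_pred))  # (0.75, 0.6)
```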

Regression

Regression in machine learning involves predicting continuous outputs by analyzing the relationships between variables. It enables businesses to make informed decisions based on clear and interpretable data, such as predicting prices or sales using numerical data and regression algorithms like linear models.
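
As a small illustration of a linear model, the sketch below fits a straight line with ordinary least squares and uses it to predict a new value. The advertising and sales figures are invented, and `fit_line` is a hypothetical helper name.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance
    intercept = mean_y - slope * mean_x
    return slope, intercept

ad_spend = [1.0, 2.0, 3.0, 4.0]   # hypothetical input values
sales = [3.0, 5.0, 7.0, 9.0]      # hypothetical outputs following y = 2x + 1
slope, intercept = fit_line(ad_spend, sales)
predicted_sales = slope * 5.0 + intercept
print(slope, intercept, predicted_sales)  # 2.0 1.0 11.0
```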

Regularization

Regularization is a method used to address overfitting by introducing a penalty for complex models in the loss function, helping to prevent excessive complexity in the learned model.
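
One widely used penalty is L2 regularization, which adds the sum of squared weights to the loss. The sketch below uses it as an illustrative choice; the `strength` value, the weights, and the function name are hypothetical.

```python
def l2_regularized_loss(data_loss, weights, strength=0.01):
    """Add an L2 penalty (strength * sum of squared weights) to the data loss."""
    penalty = strength * sum(w ** 2 for w in weights)
    return data_loss + penalty

small_weights = [0.5, -0.5]
large_weights = [5.0, -5.0]

# Both models fit the data equally well (same data loss of 1.0),
# but the one with larger weights is penalized more.
loss_small = l2_regularized_loss(1.0, small_weights)
loss_large = l2_regularized_loss(1.0, large_weights)
print(loss_small, loss_large)
```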

Reinforcement Learning

Reinforcement Learning is a branch of machine learning where an algorithm learns by trial and error to maximize rewards based on its actions in a given environment. It involves training a model to make a series of decisions, receiving rewards or penalties based on its actions, to maximize the overall reward.

Segmentation

Segmentation is the process of splitting a data set into multiple distinct sets. This division is carried out such that members of the same set are similar to one another yet differ from members of other sets.

Supervised Learning

Supervised Learning is a machine learning method that involves training algorithms using labeled datasets to classify data and make predictions. The labeled dataset provides input-output pairs, allowing the algorithm to learn patterns and predict unseen data. It includes techniques such as regression, classification, and forecasting and employs tools like decision trees for classification tasks.

Test Set

A test set is a collection of data samples that are used to evaluate the performance of a trained machine learning model. It serves as a measure of how well the model can generalize its predictions to unseen data.

Training set

A training set refers to a collection of observations that serve as the foundation for generating machine learning models.

Transfer learning

Transfer Learning is a technique in machine learning where the knowledge gained from training a model on one task is utilized as a foundation for training a model on a different task. Instead of starting from scratch, the pre-trained weights of an existing model are employed, leveraging the previously learned features.

True Positive Rate

In machine learning, the True Positive Rate is also known as sensitivity or recall. It is a performance metric used in binary classification tasks. It measures the ability of a model to correctly identify positive instances from the actual positive examples in the dataset.

Type 1 Error

A type 1 error is a false positive. In hiring terms, it occurs when a candidate appears to be a good fit but turns out to be a bad hire.

Type 2 Error

A type 2 error is a false negative. In hiring terms, it occurs when a candidate who would have been a good hire is rejected.

Underfitting

Underfitting happens when a model over-generalizes and misses out on relevant variations in the data that add predictive power. You can identify underfitting when a model performs poorly on both training and test sets.

Unsupervised Learning

Unsupervised Learning is a type of machine learning in which algorithms learn from data without labels or explicit guidance about the correct output. By analyzing and finding structure in unlabeled datasets, these algorithms can discover hidden patterns and group or summarize the data without human supervision.

Validation Set

A validation set is a collection of observations held out during model training to assess how well the model generalizes beyond the training set. If the training error keeps decreasing while the validation error increases, your model is overfitting, and you should stop training.
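
Stopping when the validation error starts to rise is often called early stopping. The sketch below is one simple way to express it; the `patience` setting, the function name, and the error values are assumptions for illustration.

```python
def early_stopping_epoch(val_errors, patience=2):
    """Return the index of the best epoch, stopping once the validation
    error has gone `patience` epochs without improving."""
    best_epoch, best_error = 0, float("inf")
    for epoch, error in enumerate(val_errors):
        if error < best_error:
            best_epoch, best_error = epoch, error
        elif epoch - best_epoch >= patience:
            break   # validation error is no longer improving: stop training
    return best_epoch

# Hypothetical validation errors recorded after each epoch.
val_errors = [0.90, 0.70, 0.55, 0.50, 0.52, 0.58, 0.66]
print(early_stopping_epoch(val_errors))  # 3
```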

Variance

Variance measures how much your predictions for a given observation differ from one another, for example across models trained on different samples of the data. Low variance means consistent predictions, while high variance suggests overfitting and too much focus on noise in the training set.

Do You Need Machine Learning Services?

Now that you know all the buzzwords, why not work with a team of experts to help you leverage the power of machine learning?

At StarTechUP, we specialize in helping businesses develop custom Machine Learning solutions tailored to their unique needs. We also offer mobile app and web development services to help you get the most out of your machine learning models.

Contact us today for more information!